The RTX Pro 6000 Blackwell is designed for high-demand workloads that require both performance and reliability at scale. Deployed on 1Legion’s dedicated bare metal infrastructure, it enables organizations to run AI training, inference, rendering, and real-time media pipelines with consistent throughput, predictable costs, and full control over their environment.

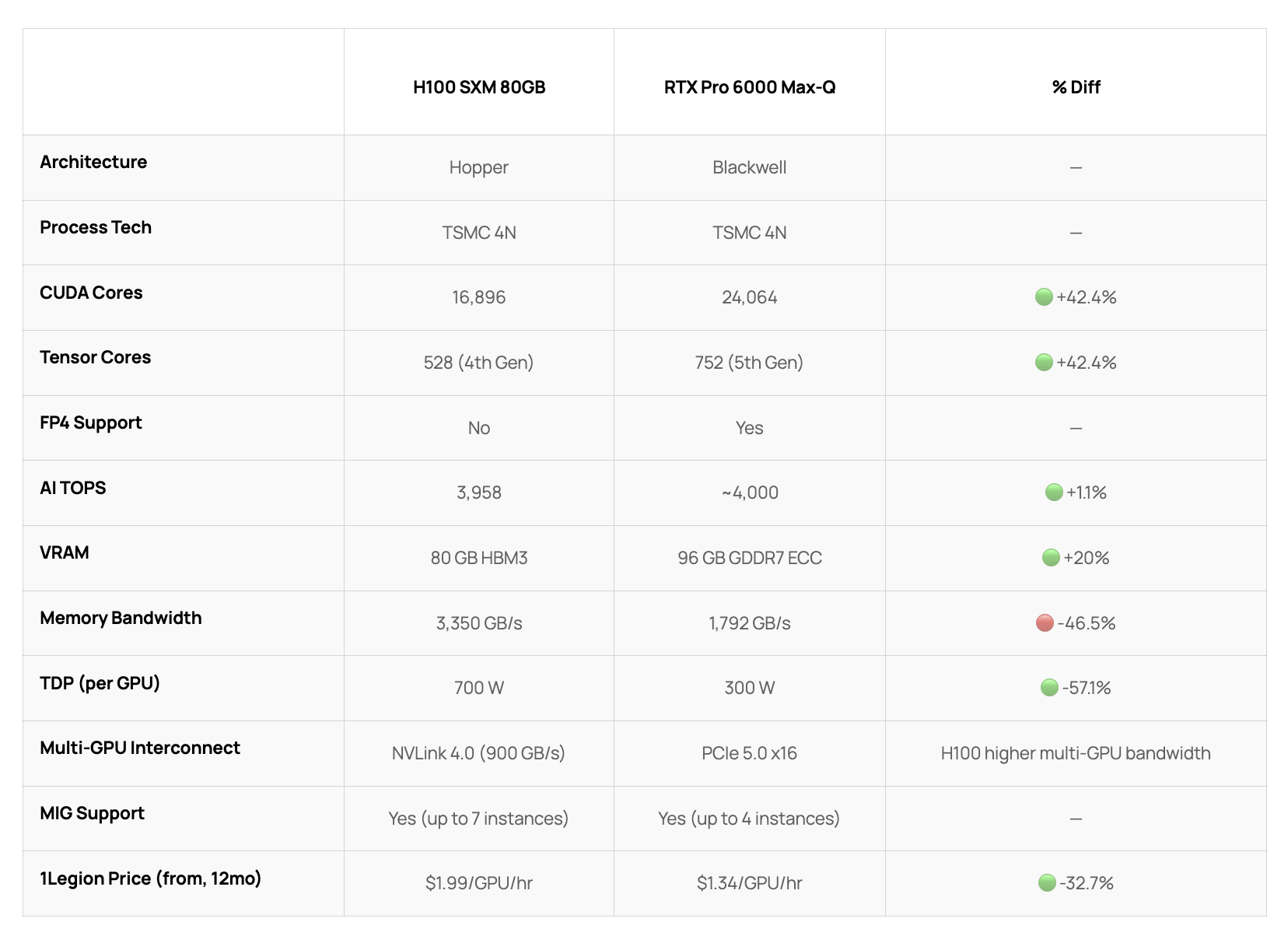

Per-GPU specs. Both are available as dedicated 8-GPU bare metal servers on 1Legion.

Real-time transcoding, playout, and low-latency streaming powered by the RTX Pro 6000 Blackwell.

Train and deploy large models with consistent performance and predictable infrastructure behavior.

Handle complex scenes, high-resolution rendering, and large-scale production workloads without bottlenecks.

No. All 1Legion GPU instances are available only as full bare metal servers, so individual GPUs cannot be rented separately. The minimum rental is the complete 8-GPU machine, which ensures dedicated resources, full memory bandwidth, and no shared infrastructure.

Minimum commitment is 1 month. 12-month and 24-month terms are available at lower per-GPU-hour rates.

Pricing is per GPU per hour, billed for the full 8-GPU server. There are no egress fees, no hidden storage charges, and no variable performance pricing.
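As a rough illustration of how per-GPU-hour pricing translates into a bill for the full server, the sketch below multiplies a rate by the 8 GPUs and the billed hours. The function name `server_cost`, the placeholder rate, and the 30-day month are assumptions made for the example, not 1Legion prices or billing rules.

```python
GPUS_PER_SERVER = 8  # from the text: the minimum rental is the full 8-GPU server


def server_cost(rate_per_gpu_hour: float, hours: float) -> float:
    """Total cost of one 8-GPU server for the given number of billed hours."""
    if rate_per_gpu_hour < 0 or hours < 0:
        raise ValueError("rate and hours must be non-negative")
    return rate_per_gpu_hour * GPUS_PER_SERVER * hours


# Hypothetical inputs for illustration only; these are not 1Legion prices.
example_rate = 1.00      # placeholder rate, per GPU per hour
example_hours = 24 * 30  # assumes a 30-day month billed around the clock

print(f"Estimated monthly cost: {server_cost(example_rate, example_hours):.2f}")
```

Substitute the quoted per-GPU-hour rate for your term length to estimate the cost of the 1-month minimum commitment.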

Each 1Legion GPU instance page includes a detailed spec comparison against reference hardware. Pricing, VRAM, compute throughput, and workload fit vary by model; see the comparison table on each page for specifics.

Tell us about your workload. Our team will match you with the right server configuration and reach out shortly.